By: Matthew Timmins

Background: Science as a whole values the ability to repeat experiments with consistent results, a laudable goal that may reduce the likelihood that published findings reflect false reporting or random chance. Unfortunately, many fields, psychological science in particular, suffer from a lack of replication studies published in peer-reviewed journals, because such studies attract little attention, yield non-significant or opposing results, and so on. In a large-scale attempt to replicate 100 different psychological studies, the Open Science Collaboration (2015) found weaker, yet similar results in approximately half of the replications compared to original studies based on 5 different indicators. This may be disheartening to scientists, because it suggests that few studies are replicable and even fewer will show effects as strong as those in the original studies. However, some critics note that there may be major methodological flaws in the replications in the Open Science Collaboration study, such as the use of vastly different sample populations (Israeli versus American) and procedures (consequences of military service versus consequences of a honeymoon) (Gilbert, King, Pettigrew, & Wilson, 2016). Instead, Gilbert and colleagues (2016) point to the Many Labs study (Klein et al., 2014), which they argue followed the original study procedures more carefully and found higher rates of replication. The debate continued, with some members of the Open Science Collaboration pointing to flaws in Gilbert and colleagues’ critique and eventually raising an obvious consideration in psychological science: humans, culture, and society are not static entities, and so “true” replication is impossible (Anderson et al., 2016). If true replications of psychological research are impossible and replication studies in general are scarcely published, what can researchers do to strengthen the claims of original research?

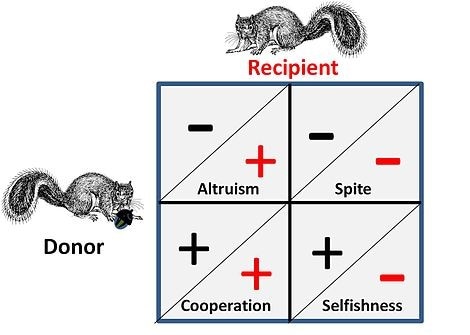

One potential solution is to use conceptual replications rather than direct replications. For example, a study observing depression in a college sample may be conceptually replicated by observing prolonged sadness and feelings of hopelessness or worthlessness in a community sample of emerging adults. Lynch Jr. and colleagues (2015) argue that successful conceptual replications allow overlapping studies to support an overarching construct found in multiple settings, while failure to replicate may suggest the presence of alternative explanations or highlight potentially distinctive constructs. Under this view, both successful and failed replications have positive implications. Multiple successful conceptual replications support an omnibus construct that remains consistent under a variety of situations. In contrast, failure to replicate may help generate alternative explanations or point to specific mechanisms that influence a more dynamic construct. Either outcome may be used to generate more research and to develop the foundation for real-world applications of psychological research.

Even with conceptual replications, non-significant results often go unpublished, a widely known flaw in the current psychological science literature. To aid in the use of conceptual replications, particularly those that fail to replicate an original study due to non-significant results, some researchers have called for registering a multitude of replications as part of a larger project, similar to the Open Science Collaboration and the Many Labs studies (Nosek & Lakens, 2014). With registered replications, an overall picture of the topic can be added to the shared knowledge, and the consequences of failed conceptual replications are less likely to be overlooked.

Conclusion: Because psychological science cannot utilize exact replications for a variety of reasons, some scientists have claimed that there may be a scientific crisis within the field. However, conceptual replications may be a suitable replacement. Conceptual replications allow for different operationalizations of the same construct; thus, both the strength of an effect and its mechanisms may be examined under a wide range of situations, whether a replication succeeds or fails. To aid in the distribution of failed and non-significant replications, registered replications may increase the number of published replications.

Key words: conceptual replications, replication registries, psychological science

References

- Anderson, C. J., Bahník, Š., Barnett-Cowan, M., Bosco, F. A., Chandler, J., Chartier, C. R., ... Zuni, K. (2016). Response to Comment on “Estimating the reproducibility of psychological science.” Science, 351(6277), 1037–1037. https://doi.org/10.1126/science.aad9163

- Gilbert, D. T., King, G., Pettigrew, S., & Wilson, T. D. (2016). Comment on “Estimating the reproducibility of psychological science.” Science, 351(6277), 1037–1037. https://doi.org/10.1126/science.aad7243

- Klein, R. A., Ratliff, K. A., Vianello, M., Adams, R. B., Bahník, Š., Bernstein, M. J., ... Nosek, B. A. (2014). Investigating Variation in Replicability. Social Psychology, 45(3), 142–152. https://doi.org/10.1027/1864-9335/a000178

- Lynch Jr., J. G., Bradlow, E. T., Huber, J. C., & Lehmann, D. R. (2015). Reflections on the replication corner: In praise of conceptual replications. International Journal of Research in Marketing, 32(4), 333–342. https://doi.org/10.1016/j.ijresmar.2015.09.006

- Nosek, B. A., & Lakens, D. (2014). Registered Reports. Social Psychology, 45(3), 137–141. https://doi.org/10.1027/1864-9335/a000192

- Open Science Collaboration. (2015). Estimating the reproducibility of psychological science. Science, 349(6251), aac4716. https://doi.org/10.1126/science.aac4716
